Table of Contents

- Introduction

- What Is Context Engineering in AI?

- The Shift from “Input” to “Environment”: Prompt Engineering vs. Context Engineering

- The Building Blocks of Context Engineering

- System Instructions

- User Input Augmentation

- Retrieval Augmented Generation

- Tool Use and Function Integration

- Memory Systems

- Conversation History Management

- Context Compression

- Real-World Results of Context Engineering

- Why Context Engineering Matters in 2026

- Expanding Context Windows: Relevance Over Volume

- Rise of AI Agents and Complex Workflows

- Closing the AI ROI Gap

- Context Engineering as a Business Capability

- The Future of Context Engineering

- Final Takeaway

Context Engineering: The Next Evolution After Prompt Engineering

Introduction to Context Engineering in AI

Over the past year, a familiar pattern of failure has emerged for firms using Large Language Models (LLMs). You have probably seen it: the output is inconsistent or contextually thin, even though the system instructions are well written and the logic is sound.

The industry is beginning to realize that these aren’t failures of intelligence, but failures of information. We have reached the functional limit of what a standalone prompt can achieve.

In 2026, the strategic focus has shifted. The era of Prompt Engineering, the art of wording, is giving way to Context Engineering, the science of environmental design. As we move from basic chatbots to autonomous AI agents, “asking the right question” is no longer sufficient. The goal now is to give the model the memory, tools, and knowledge sources it needs to carry out high-stakes business logic accurately.

In this blog, we’ll break down what context engineering is, why it matters, and how businesses can use it to build more reliable and scalable AI systems.

What Is Context Engineering in AI?

Context engineering is the practice of structuring, managing, and delivering the right information to AI systems so they can generate accurate, relevant, and reliable outputs. Instead of relying only on prompts, it focuses on the complete information environment, including the data, memory, workflows, and tools that support AI performance in real-world business scenarios.

For organizations, context engineering plays a critical role in improving decision-making, reducing errors, and ensuring consistency across AI-driven processes. It enables systems to work with meaningful, up-to-date information rather than generic or incomplete inputs.

If you’re exploring ways to improve AI outcomes, understanding how AI systems work and implementing retrieval-based data strategies can significantly enhance results. By prioritizing structured context, businesses can move from basic AI usage to scalable, high-impact solutions that deliver measurable value across operations, customer experience, and analytics.

The Shift from “Input” to “Environment”: Prompt Engineering vs. Context Engineering

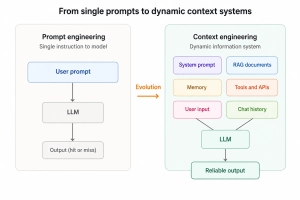

The fundamental difference between these two disciplines lies in how we view the model’s role in the enterprise:

Prompt Engineering was a linguistic exercise. It relied on “tricking” the model into better performance through specific formatting, persona adoption, or repetitive instructions. It was a manual, often fragile, way to guide an LLM.

Context Engineering is a systems design exercise. It is the architectural work of building a dynamic “working memory” for the AI. It involves orchestrating data pipelines (RAG), managing long-term memory (vector databases), and providing operational capabilities (tool use, for example through the Model Context Protocol, or MCP).

To understand the shift, consider a professional accountant.

- Prompt Engineering: Asking the accountant, “Can you calculate my taxes for 2025?”

- Context Engineering: Handing the accountant your bank statements, receipts, previous year’s returns, and a calculator, and then setting them up in a quiet office with a secure connection to the IRS database.

Context engineering ensures the AI doesn’t just have an instruction; it has a working environment.

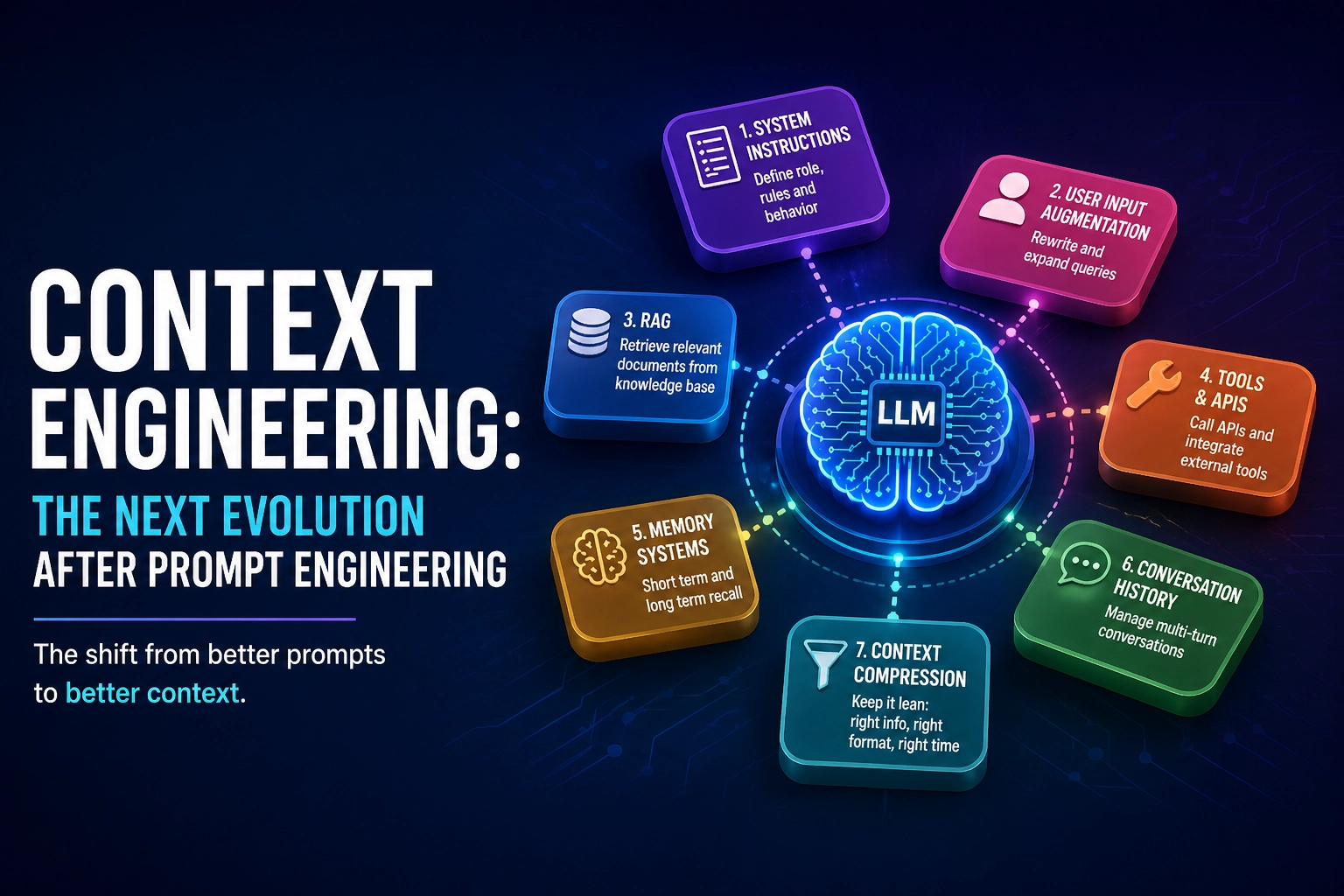

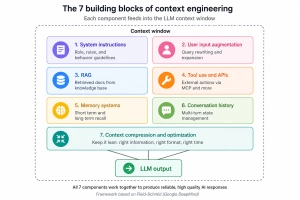

The Building Blocks of Context Engineering

So what does context engineering look like in practice? Whether you’re in healthcare, finance, SaaS, retail, or operations, the underlying principles remain the same.

Practitioners commonly describe seven core components that form the foundation of effective context engineering. These components help organizations design AI systems that are reliable, scalable, and aligned with real business needs.


System Instructions

System instructions define the role, purpose, and behavior of the AI. Think of this as creating a job description for your AI system. It answers key questions like:

- What is the AI responsible for?

- What tone should it use?

- What boundaries should it operate within?

For any business, this ensures that the AI behaves consistently and aligns with organizational goals. The key is balance: instructions should be clear enough to guide behavior, yet flexible enough to adapt to different situations.
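To make the “job description” idea concrete, here is a minimal sketch that stores the role, tone, and boundaries as structured fields and renders them into a system message. The `SystemInstructions` class and `build_messages` helper are hypothetical names for illustration, and the role/content message format is an assumption based on the common chat-API convention; adapt it to whichever provider you use.

```python
from dataclasses import dataclass, field


@dataclass
class SystemInstructions:
    """Hypothetical structured 'job description' for an AI assistant."""
    role: str
    tone: str
    boundaries: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Turn the structured fields into a single system message string.
        lines = [f"You are {self.role}.", f"Use a {self.tone} tone."]
        if self.boundaries:
            lines.append("Rules:")
            lines.extend(f"- {rule}" for rule in self.boundaries)
        return "\n".join(lines)


def build_messages(instructions: SystemInstructions, user_text: str) -> list[dict]:
    # Role/content dicts follow a common chat-message convention (assumption).
    return [
        {"role": "system", "content": instructions.render()},
        {"role": "user", "content": user_text},
    ]


support_bot = SystemInstructions(
    role="a support assistant for Acme billing",
    tone="friendly, concise",
    boundaries=["Never share full account numbers", "Escalate refund disputes to a human"],
)
messages = build_messages(support_bot, "Why was I charged twice?")
```

Keeping the instructions as data rather than a hand-edited string makes it easier to version them and reuse the same role across workflows.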

User Input Augmentation

In most real-world scenarios, user inputs are incomplete or unclear. Context engineering improves this by:

- Clarifying intent

- Adding missing details

- Structuring the request

For example, instead of acting on a vague input like “help me with this issue,” the system refines it into something actionable with a clear context. This step is critical across industries because it reduces ambiguity and ensures the AI works with well-defined, meaningful inputs.
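One way to picture input augmentation is a step that wraps the raw request with details the application already knows. In this sketch, the `SessionContext` fields (product, plan, last error) are hypothetical examples of such metadata, and the fixed “Task” line stands in for intent clarification. A production system might instead infer intent with a classifier or an LLM call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionContext:
    # Hypothetical metadata the application might already hold about the user.
    product: str
    plan: str
    last_error: Optional[str] = None


def augment_input(raw: str, ctx: SessionContext) -> str:
    """Rewrite a vague request into a structured, explicit one."""
    details = [f"Product: {ctx.product}", f"Plan: {ctx.plan}"]
    if ctx.last_error:
        # Add a missing detail the user did not mention but the system knows.
        details.append(f"Most recent error: {ctx.last_error}")
    lines = [f"User request (verbatim): {raw.strip()}", "Known context:"]
    lines += [f"- {d}" for d in details]
    # Make the intended task explicit instead of leaving it implied.
    lines.append("Task: identify the likely issue and propose concrete next steps.")
    return "\n".join(lines)


prompt = augment_input(
    "  help me with this issue ",
    SessionContext(product="Invoicing", plan="Pro", last_error="ERR_TIMEOUT on export"),
)
```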

Retrieval Augmented Generation (RAG)

RAG enables AI systems to pull in relevant information from external sources such as:

- Internal documents

- Knowledge bases

- Databases

This allows the AI to provide responses based on actual, up-to-date information, rather than relying only on pre-trained knowledge. A key best practice here is “just-in-time retrieval”: bringing in only the most relevant data when it is needed, instead of overloading the system upfront.
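A minimal sketch of just-in-time retrieval is shown below. It assumes a small in-memory document set and swaps in simple bag-of-words cosine similarity where a real system would typically use embeddings and a vector database. Documents are scored only when a query arrives, and only the top matches above a threshold enter the prompt. The `retrieve` and `build_rag_prompt` names, `k`, and `min_score` are illustrative choices.

```python
import math
import re
from collections import Counter


def _tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: dict[str, str], k: int = 2, min_score: float = 0.1) -> list[str]:
    """Score documents against this query at request time and keep the top k."""
    q = _tokens(query)
    scored = sorted(
        ((_cosine(q, _tokens(text)), doc_id) for doc_id, text in documents.items()),
        reverse=True,
    )
    return [doc_id for score, doc_id in scored[:k] if score >= min_score]


def build_rag_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    # Only the retrieved sources are placed in the context, not the whole corpus.
    sources = "\n".join(f"[{i}] {documents[i]}" for i in retrieve(query, documents, k))
    return f"Answer using only the sources below.\n{sources}\n\nQuestion: {query}"


docs = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "careers": "We are hiring engineers in Berlin.",
}
prompt = build_rag_prompt("How many days do refunds take?", docs)
```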

Tool Use and Function Integration

Modern AI systems are not limited to generating responses; they can also take action. This includes:

- Accessing external systems

- Fetching or updating data

- Triggering workflows

For businesses, this means AI can move from being a passive assistant to an active participant in operations, helping execute tasks rather than just suggesting them.
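Tool use usually follows a simple loop: the model emits a structured request naming a tool and its arguments, the application executes it, and the result is fed back into the context. The sketch below shows only the dispatch step. The `{"name": ..., "arguments": {...}}` shape is an assumption (providers differ in the exact format), and `get_order_status` is a hypothetical tool backed by a fake lookup table.

```python
import json
from typing import Callable

TOOLS: dict[str, Callable[..., dict]] = {}


def tool(fn: Callable[..., dict]) -> Callable[..., dict]:
    """Register a function so the model can request it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    # Hypothetical stand-in for a call to an order-management system.
    fake_db = {"A100": "shipped", "A101": "processing"}
    return {"order_id": order_id, "status": fake_db.get(order_id, "not_found")}


def dispatch(tool_call_json: str) -> dict:
    """Execute a model-issued tool call of the assumed form {"name", "arguments"}."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        # Refuse anything that was not explicitly registered.
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**call.get("arguments", {}))


result = dispatch('{"name": "get_order_status", "arguments": {"order_id": "A100"}}')
```

Restricting execution to an explicit registry is a simple way to keep the AI's actions within the boundaries set by its system instructions.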

Memory Systems

Memory enables AI systems to maintain continuity and improve over time. There are three key types:

- Short-term memory: Information within the current interaction

- Long-term memory: Historical data across interactions

- Knowledge memory: Stored facts and domain information

Across industries, memory helps AI deliver more personalized, consistent, and context-aware outputs.
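The three memory types can be illustrated with a toy class that keeps each in a separate store and combines them when building context. This is a sketch under simplifying assumptions: everything lives in process memory, long-term facts are keyed by a hypothetical `user_id`, and knowledge is matched by a plain keyword check. A real system would persist long-term memory and would likely use retrieval for the knowledge store.

```python
from collections import deque


class AgentMemory:
    """Toy illustration of short-term, long-term, and knowledge memory."""

    def __init__(self, short_term_size: int = 4) -> None:
        self.short_term: deque = deque(maxlen=short_term_size)  # current interaction only
        self.long_term: dict[str, list[str]] = {}               # per-user facts across sessions
        self.knowledge: dict[str, str] = {}                     # stored domain facts by topic

    def remember_turn(self, speaker: str, text: str) -> None:
        # Oldest turns fall out automatically once maxlen is reached.
        self.short_term.append(f"{speaker}: {text}")

    def remember_fact(self, user_id: str, fact: str) -> None:
        self.long_term.setdefault(user_id, []).append(fact)

    def add_knowledge(self, topic: str, fact: str) -> None:
        self.knowledge[topic] = fact

    def context_for(self, user_id: str, query: str) -> str:
        # Include only knowledge whose topic keyword appears in the query.
        topics = [t for t in self.knowledge if t in query.lower()]
        parts = ["Known about user:"] + [f"- {f}" for f in self.long_term.get(user_id, [])]
        parts += ["Relevant knowledge:"] + [f"- {self.knowledge[t]}" for t in topics]
        parts += ["Recent conversation:"] + list(self.short_term)
        return "\n".join(parts)
```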

Conversation History Management

As interactions grow, managing past context becomes essential. Instead of storing everything, effective systems use:

- Selective retention of important details

- Summarization of previous interactions

- Rolling or “sliding” context windows

This ensures the AI retains what matters most while avoiding unnecessary complexity.
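The three techniques above can be combined in a single history-trimming step, sketched below under stated assumptions. The last `window` turns are kept verbatim (the sliding window), older turns containing flagged keywords are retained (selective retention), and the remaining older turns are collapsed into one summary line. `naive_summary` is a placeholder that simply truncates text; in practice this step would likely call a model to write the summary. All names and default keywords are hypothetical.

```python
from typing import Callable, Sequence


def naive_summary(turns: Sequence[str]) -> str:
    # Placeholder: a real system would likely ask an LLM to summarize these turns.
    return "Summary of earlier turns: " + " | ".join(t[:40] for t in turns)


def manage_history(
    turns: list[str],
    window: int = 4,
    pinned_keywords: tuple[str, ...] = ("order id", "deadline"),
    summarize: Callable[[Sequence[str]], str] = naive_summary,
) -> list[str]:
    """Sliding window plus selective retention plus summarization of the rest."""
    if window < 1:
        raise ValueError("window must be >= 1")
    older, recent = turns[:-window], turns[-window:]
    pinned, dropped = [], []
    for turn in older:
        # Keep older turns that mention something flagged as important.
        important = any(k in turn.lower() for k in pinned_keywords)
        (pinned if important else dropped).append(turn)
    result = [summarize(dropped)] if dropped else []
    return result + pinned + recent
```

This sketch places the summary first, then pinned facts, then the recent turns; other orderings are equally reasonable depending on how the model uses them.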

Context Compression

One of the most important principles in context engineering is that more information does not equal better results. Providing too much or irrelevant data can reduce accuracy and performance. Context compression focuses on:

- Prioritizing relevance

- Removing noise

- Delivering concise, high-quality inputs

The goal is to ensure the AI works with focused and meaningful context, regardless of the industry or use case.
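A minimal sketch of context compression might look like this. It normalizes whitespace, drops empty and duplicate chunks (removing noise), ranks the rest by keyword overlap with the query (prioritizing relevance), and greedily fills a token budget. Two simplifications are assumptions worth stating: relevance is plain word overlap rather than a learned score, and one word is treated as roughly one token, which real tokenizers do not guarantee.

```python
import re


def _words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def compress_context(query: str, chunks: list[str], token_budget: int = 50) -> list[str]:
    """Keep the most query-relevant, non-duplicate chunks that fit a rough token budget."""
    q = _words(query)
    seen: set[str] = set()
    candidates: list[tuple[int, str]] = []
    for chunk in chunks:
        cleaned = " ".join(chunk.split())       # collapse noisy whitespace
        key = cleaned.lower()
        if not cleaned or key in seen:          # drop empty chunks and exact duplicates
            continue
        seen.add(key)
        overlap = len(q & _words(cleaned))
        if overlap:                             # drop chunks with no relevance signal
            candidates.append((overlap, cleaned))
    # Stable sort: chunks with equal scores keep their original order.
    candidates.sort(key=lambda pair: pair[0], reverse=True)
    kept, used = [], 0
    for _, text in candidates:
        cost = len(text.split())                # crude estimate: one word ~ one token
        if used + cost <= token_budget:
            kept.append(text)
            used += cost
    return kept
```

Because the budget is filled greedily, a large chunk that does not fit is skipped while smaller, lower-ranked chunks may still be included.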

Real-World Results of Context Engineering

Context engineering is no longer theoretical; it is delivering measurable business outcomes across industries. Organizations that structure the right information for AI systems are seeing improved accuracy, faster execution, and more consistent outputs. The shift from prompts to context is directly impacting performance at scale.

Businesses implementing context engineering report fewer operational errors and better decision-making. When AI systems are provided with relevant data, historical inputs, and structured workflows, they perform more reliably. This leads to increased productivity and reduced dependency on manual corrections across teams.

Another key benefit is improved output quality. AI systems that operate with strong contextual foundations produce responses that are more aligned, actionable, and trustworthy. This builds confidence in AI adoption and enables organizations to scale usage across multiple business functions effectively.


Why Context Engineering Matters in 2026

Expanding Context Windows: Relevance Over Volume

AI models today can process significantly larger context windows, but more data does not guarantee better results. When irrelevant or excessive information is included, it often reduces clarity and accuracy. Context engineering ensures that only meaningful, high-value information is provided, improving performance.

Rise of AI Agents and Complex Workflows

The shift from chatbots to AI agents has increased the need for structured context. These systems perform multi-step tasks, interact with tools, and maintain continuity. Without proper context management, their performance declines. Context engineering enables seamless execution across workflows and improves system reliability.

Closing the AI ROI Gap

Many organizations are investing heavily in AI but struggling to see measurable returns. One major reason is fragmented or poorly structured data. Context engineering bridges this gap by aligning data, workflows, and AI systems, helping businesses achieve better efficiency, accuracy, and overall ROI.

Context Engineering as a Business Capability

Context engineering is rapidly becoming a core business capability, not just a technical concept. As organizations integrate AI into daily workflows, the quality of results depends on how well the input context is structured and delivered. Without the right information, even advanced AI systems can produce inconsistent or unreliable outputs.

To make AI truly effective, businesses need to focus on organizing data, retrieving relevant information, integrating workflows, and maintaining context across interactions. At the same time, domain understanding plays a crucial role in identifying what information actually matters.

What makes context engineering valuable is that it is not limited to technical teams. Professionals across the organization, in marketing, operations, and analytics, can use it to improve AI performance, enhance decision-making, and drive more consistent, scalable outcomes.

The Future of Context Engineering

Context engineering is emerging as a foundational layer in AI development. As systems evolve, more automation will be introduced to optimize context dynamically. AI systems will become better at selecting relevant information and improving their own performance over time.

Advancements in memory systems, retrieval methods, and AI orchestration will further enhance how context is managed. Many platforms are already integrating built-in context engineering capabilities, making it easier for businesses to implement these practices at scale.

Final Takeaway

Context engineering represents a shift from experimenting with AI to making it truly effective in real-world scenarios. It ensures AI systems deliver consistent, high-quality outcomes at scale.

For businesses, the focus should be clear: prioritize relevant information, structure context effectively, and design systems that support AI performance. This approach is key to unlocking long-term value from AI investments.

Transform Your AI Performance with Context-Driven Solutions by Codestore

Ready to move beyond prompts and build AI systems that actually deliver results? At Codestore, we help businesses design and implement powerful context engineering strategies that improve accuracy, efficiency, and scalability. From structuring data pipelines to integrating AI into real workflows, our solutions are built for real-world impact, not experiments.

Whether you’re just getting started with AI or looking to optimize existing systems, our team can help you unlock true business value. Let’s turn your AI from a tool into a competitive advantage.

Get in touch with Codestore today and start building smarter, context-driven AI solutions.